Chapter 5 Setting Up a Maven Project and Testing

5.1 Prepare the pom file

<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>

    <groupId>com.atguigu.flink</groupId>
    <artifactId>flink</artifactId>
    <version>1.0-SNAPSHOT</version>

    <dependencies>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-scala_2.11</artifactId>
            <version>1.7.0</version>
        </dependency>
        <!-- https://mvnrepository.com/artifact/org.apache.flink/flink-streaming-scala -->
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-streaming-scala_2.11</artifactId>
            <version>1.7.0</version>
        </dependency>
    </dependencies>

    <build>
        <plugins>
            <!-- This plugin compiles Scala code into class files -->
            <plugin>
                <groupId>net.alchim31.maven</groupId>
                <artifactId>scala-maven-plugin</artifactId>
                <version>3.4.6</version>
                <executions>
                    <execution>
                        <!-- Bind these goals to Maven's compile phase -->
                        <goals>
                            <goal>compile</goal>
                            <goal>testCompile</goal>
                        </goals>
                    </execution>
                </executions>
            </plugin>
            <!-- This plugin packages the project and its dependencies into a single jar -->
            <plugin>
                <groupId>org.apache.maven.plugins</groupId>
                <artifactId>maven-assembly-plugin</artifactId>
                <version>3.0.0</version>
                <configuration>
                    <descriptorRefs>
                        <descriptorRef>jar-with-dependencies</descriptorRef>
                    </descriptorRefs>
                </configuration>
                <executions>
                    <execution>
                        <id>make-assembly</id>
                        <phase>package</phase>
                        <goals>
                            <goal>single</goal>
                        </goals>
                    </execution>
                </executions>
            </plugin>
        </plugins>
    </build>

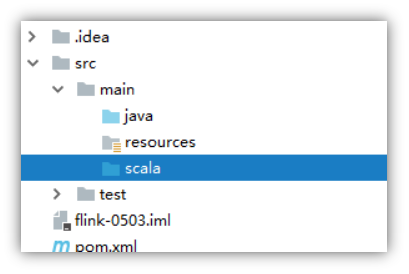

</project>5.2 添加scala框架 和 scala文件夹

5.3 Batch wordcount

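import org.apache.flink.api.scala.{AggregateDataSet, DataSet, ExecutionEnvironment}

// The object name is illustrative
object BatchWcApp {
  def main(args: Array[String]): Unit = {
    // Create the batch execution environment
    val env: ExecutionEnvironment = ExecutionEnvironment.getExecutionEnvironment
    // Read the input file
    val input = "file:///d:/temp/hello.txt"
    val ds: DataSet[String] = env.readTextFile(input)
    // flatMap and map require this implicit conversion
    import org.apache.flink.api.scala.createTypeInformation
    // Group by the word (field 0) and sum the counts (field 1)
    val aggDs: AggregateDataSet[(String, Int)] = ds.flatMap(_.split(" ")).map((_, 1)).groupBy(0).sum(1)
    // Print the result
    aggDs.print()
  }
}

Note: Flink programs can be written in either Java or Scala; this course mainly uses Scala.

When an import is available from both a Java package and a Scala package, make sure to pick the Scala one.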
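The flatMap, map, groupBy and sum steps of the batch job can be tried on an ordinary Scala collection before touching Flink at all. The following is only a sketch of the same logic, not Flink code: the object name WordCountSketch, the helper wordCount, and the two sample lines standing in for hello.txt are all assumptions made for illustration.

```scala
object WordCountSketch {
  // Mirrors the batch job: split lines into words, pair each word with 1,
  // group the pairs by word, then sum the ones in each group.
  def wordCount(lines: Seq[String]): Map[String, Int] =
    lines
      .flatMap(_.split(" "))
      .map((_, 1))
      .groupBy(_._1)
      .map { case (word, pairs) => (word, pairs.map(_._2).sum) }

  def main(args: Array[String]): Unit = {
    // Hypothetical stand-in for the contents of hello.txt
    val lines = Seq("hello flink", "hello scala")
    // Sort by word so the printed order is stable
    wordCount(lines).toSeq.sortBy(_._1).foreach(println)
  }
}
```

With these two sample lines the sketch prints (flink,1), (hello,2) and (scala,1). The Flink job computes the same kind of totals, but addresses tuple fields by position (groupBy(0), sum(1)) and does not guarantee the output order.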

5.4 Streaming wordcount

import org.apache.flink.api.java.utils.ParameterTool
import org.apache.flink.streaming.api.scala.{DataStream, StreamExecutionEnvironment}

object StreamWcApp {
  def main(args: Array[String]): Unit = {
    // Read the host and port from the command-line arguments
    val tool: ParameterTool = ParameterTool.fromArgs(args)
    val host: String = tool.get("host")
    val port: Int = tool.get("port").toInt
    // Create the stream execution environment
    val env: StreamExecutionEnvironment = StreamExecutionEnvironment.getExecutionEnvironment
    // Receive a text stream from the socket
    val textDstream: DataStream[String] = env.socketTextStream(host, port)
    // Implicit conversions required by flatMap and map
    import org.apache.flink.api.scala._
    // Split into words, drop empty strings, key by word and sum the counts
    val dStream: DataStream[(String, Int)] = textDstream.flatMap(_.split(" ")).filter(_.nonEmpty).map((_, 1)).keyBy(0).sum(1)
    // Print the running counts
    dStream.print()
    // Start the job
    env.execute()
  }
}

5.5 Testing

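On a Linux system, start a listener with

nc -lk 7777

Then launch the job with the program arguments --host (the hostname of that machine) and --port 7777, and type lines into nc to send test data.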
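When reading the test output, it helps to know that the streaming job emits an updated count every time a word arrives, rather than one final total per word. The sketch below imitates that behavior with plain Scala and no Flink dependency. The names RunningCountSketch and runningCounts and the sample input are assumptions for illustration only.

```scala
import scala.collection.mutable

object RunningCountSketch {
  // For each incoming word, emit (word, count so far), which is roughly
  // what keyBy(0).sum(1) does on an unbounded stream.
  def runningCounts(lines: Seq[String]): Seq[(String, Int)] = {
    val counts = mutable.Map.empty[String, Int]
    val words = lines.flatMap(_.split(" ")).filter(_.nonEmpty)
    words.map { word =>
      val updated = counts.getOrElse(word, 0) + 1
      counts(word) = updated
      (word, updated)
    }
  }

  def main(args: Array[String]): Unit = {
    // Hypothetical lines typed into nc, one after another
    runningCounts(Seq("hello flink", "hello scala")).foreach(println)
  }
}
```

For this input the sketch prints (hello,1), (flink,1), (hello,2), (scala,1). In the real job each printed record may also carry a subtask prefix, and records for different words may interleave when the parallelism is greater than one.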