What nn.AdaptiveAvgPool2d() and nn.AvgPool2d() are each for

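First, why I started looking at this: I was using a ResNet with SE modules and my program crashed. The cause turned out to be that I had used nn.AvgPool2d() where I should have used nn.AdaptiveAvgPool2d().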

1. The problem

Below is the SEResNet module I wrote, followed by the error my program reported:

    import math

    import torch.nn as nn


    class SEResNet(nn.Module):

        def __init__(self, block, layers, num_classes=1000):
            self.inplanes = 64
            super(SEResNet, self).__init__()
            # Stem: 7x7 stride-2 convolution followed by a 3x3 stride-2 max pool
            self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3, bias=False)
            self.bn1 = nn.BatchNorm2d(64)
            self.relu = nn.ReLU(inplace=True)
            self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
            # Four residual stages; stages 2 to 4 each halve the spatial size
            self.layer1 = self._make_layer(block, 64, layers[0])
            self.layer2 = self._make_layer(block, 128, layers[1], stride=2)
            self.layer3 = self._make_layer(block, 256, layers[2], stride=2)
            self.layer4 = self._make_layer(block, 512, layers[3], stride=2)
            # Fixed 7x7 pooling window: this is the line that triggers the error
            self.avgpool = nn.AvgPool2d(7, stride=1)
            self.fc = nn.Linear(512 * block.expansion, num_classes)

            # Weight initialization
            for m in self.modules():
                if isinstance(m, nn.Conv2d):
                    n = m.kernel_size[0] * m.kernel_size[1] * m.out_channels
                    m.weight.data.normal_(0, math.sqrt(2. / n))
                elif isinstance(m, nn.BatchNorm2d):
                    m.weight.data.fill_(1)
                    m.bias.data.zero_()

        def _make_layer(self, block, planes, blocks, stride=1):
            # Project the shortcut when the spatial size or channel count changes
            downsample = None
            if stride != 1 or self.inplanes != planes * block.expansion:
                downsample = nn.Sequential(
                    nn.Conv2d(self.inplanes, planes * block.expansion,
                              kernel_size=1, stride=stride, bias=False),
                    nn.BatchNorm2d(planes * block.expansion),
                )

            layers = []
            layers.append(block(self.inplanes, planes, stride, downsample))
            self.inplanes = planes * block.expansion
            for i in range(1, blocks):
                layers.append(block(self.inplanes, planes))

            return nn.Sequential(*layers)

        def forward(self, x):
            x = self.conv1(x)
            x = self.bn1(x)
            x = self.relu(x)
            x = self.maxpool(x)

            x = self.layer1(x)
            x = self.layer2(x)
            x = self.layer3(x)
            x = self.layer4(x)

            x = self.avgpool(x)
            x = x.view(x.size(0), -1)  # flatten to (batch, features)
            x = self.fc(x)

            return x

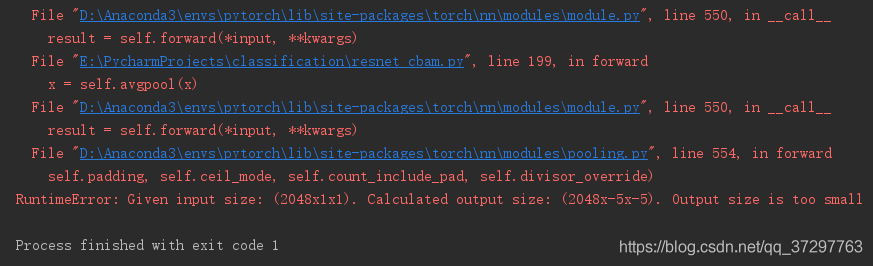

That is part of the program. Running the main script produces the following error:

RuntimeError: Given input size: (2048x1x1). Calculated output size: (2048x-5x-5). Output size is too small.

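The error can be read like this: the given input size is 2048x1x1 and the computed output size is 2048x-5x-5. The output size has gone negative, so it is too small. In other words, you cannot pool a 1x1 feature map (a single pixel) with a 7x7 kernel.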
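To see where the -5 comes from, here is a minimal sketch of the usual pooling output-size formula, floor((size + 2*padding - kernel) / stride) + 1, applied along one spatial dimension. The helper name pool_output_size is my own and is not part of PyTorch.

```python
def pool_output_size(size, kernel, stride=1, padding=0):
    """Output length along one spatial dimension of a pooling window (floor mode)."""
    return (size + 2 * padding - kernel) // stride + 1


# A 7x7 window with stride 1 on a 1x1 feature map: (1 - 7) // 1 + 1
print(pool_output_size(1, kernel=7, stride=1))  # -5

# The same window on a 7x7 feature map: (7 - 7) // 1 + 1
print(pool_output_size(7, kernel=7, stride=1))  # 1
```

A negative result means the window does not fit inside the input at all, which is exactly what the error message complains about.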

2. The solution

Change the self.avgpool line in __init__ of the code above: replace self.avgpool = nn.AvgPool2d(7, stride=1) with self.avgpool = nn.AdaptiveAvgPool2d(1).
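The difference between the two modules is easy to check in isolation. The sketch below is only an illustration: the tensor shapes (batch of 2, 16 channels) are arbitrary values I picked, not the ones from my network.

```python
import torch
import torch.nn as nn

fixed_pool = nn.AvgPool2d(7, stride=1)   # window size is fixed at 7x7
adaptive_pool = nn.AdaptiveAvgPool2d(1)  # output size is fixed at 1x1

large = torch.randn(2, 16, 7, 7)  # 7x7 feature map
small = torch.randn(2, 16, 1, 1)  # 1x1 feature map

# On a 7x7 map both modules average over the whole map.
print(fixed_pool(large).shape)
print(adaptive_pool(large).shape)

# On a 1x1 map only the adaptive version works.
print(adaptive_pool(small).shape)

try:
    fixed_pool(small)
    fixed_failed = False
except RuntimeError as err:
    fixed_failed = True
    print("AvgPool2d failed:", err)
```

nn.AvgPool2d fixes the window and lets the output size follow from the input, while nn.AdaptiveAvgPool2d fixes the output size and picks the window to match, so it keeps working when the input resolution changes.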

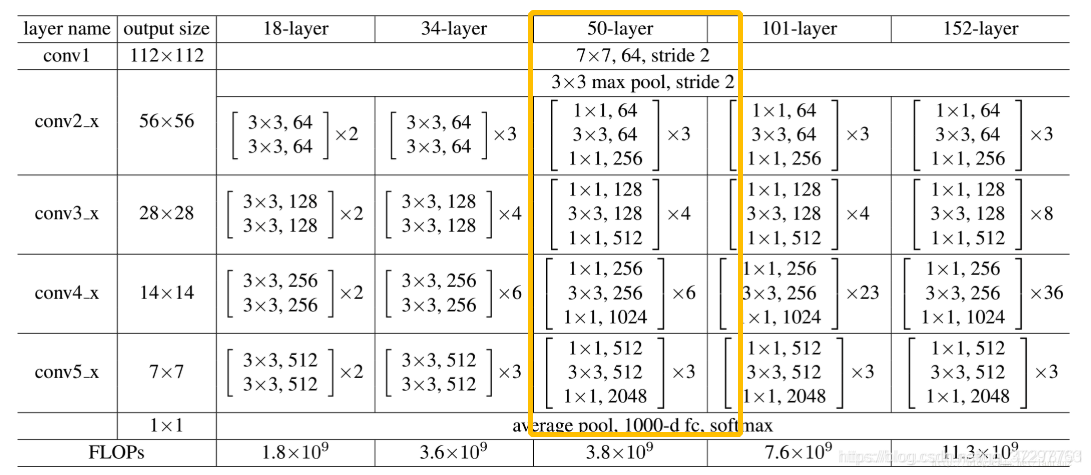

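Of course, I did not make this change blindly. I looked at the ResNet source code, and it is written the same way: self.avgpool = nn.AdaptiveAvgPool2d(1). This step can be understood as global average pooling. So why does 7x7 average pooling cause a bug? Because by the time the average pooling runs, the feature map has probably already shrunk to 1x1, and a larger kernel simply cannot be applied to it. With a plain ResNet on ImageNet, or on any other dataset whose input images are 224x224, the bug does not appear: after the fourth layer the feature map is 7x7, so a 7x7 pooling kernel fits exactly!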

The figure above is borrowed from someone else!
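The 7x7 versus 1x1 reasoning can be traced by hand. The sketch below follows the spatial size through the stem and the four stages, assuming each strided stage downsamples with a 3x3, stride-2, padding-1 convolution (the block definition is not shown in this post, so that part is an assumption). The 32x32 input is also just an example of a small image; the helper names are my own.

```python
def conv_out(size, kernel, stride, padding):
    """Output length along one spatial dimension of a conv or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1


def resnet_feature_size(size):
    """Spatial size of the feature map that reaches self.avgpool."""
    size = conv_out(size, kernel=7, stride=2, padding=3)  # conv1
    size = conv_out(size, kernel=3, stride=2, padding=1)  # maxpool
    # layer1 uses stride 1 and keeps the size unchanged
    for _ in range(3):  # layer2, layer3, layer4 (assumed 3x3, stride 2, padding 1)
        size = conv_out(size, kernel=3, stride=2, padding=1)
    return size


print(resnet_feature_size(224))  # 7: a 7x7 pooling window fits exactly
print(resnet_feature_size(32))   # 1: a 7x7 pooling window no longer fits
```

The network reduces the resolution by a factor of 32 overall, so any input much smaller than 224x224 leaves less than 7x7 for the final pooling layer.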

This is just my own understanding. If anything here is wrong, corrections are very welcome!